I was partially wrong in promoting ‘experiment velocity’ as an important Innovation Accounting KPI. I’m going to tell you why, and what the fix is. But first, let me briefly tell you how I initially concluded that ‘experiment velocity’ was the Innovation Accounting KPI to rule them all.

Financial KPIs should not be used to measure new businesses. It is impossible for a nascent venture to compete with a mature one on financial KPIs, so a new, more flexible KPI system needs to be created. Otherwise corporations will not be able to justify investments in new ventures.

But in the corporations’ defense: without a viable alternative to the tried and tested financial KPI system, and with a pressing need to make investment decisions, they reverted to their comfort zone. At the end of the day, bottom-line thinking has been at the epicenter of corporate growth for decades.

But with unvalidated assumptions being the number one cause of businesses failing, something needs to be done. The lean startup methodology encourages product teams to test their assumptions before launching. As this mindset is gradually gathering followers in the corporate landscape, ‘experiment velocity’ looked like a good KPI alternative to the standard ROI.

So what is wrong with ‘experiment velocity’?

To begin with, ‘experiment velocity’ can be gamed, purposefully or not. Product teams knowing that they are having their ‘experiment velocity’ measured might claim that every tiny thing they do is an experiment. Or, giving them the benefit of the doubt, they don’t know how to design the right experiments so although their velocity is high, their impact is low.

While doing some innovation ecosystem design work for a blue chip company that uses Lean Startup as the modus operandi for all product teams, I discovered that ‘experiment velocity’ can sometimes be a misleading innovation accounting KPI.

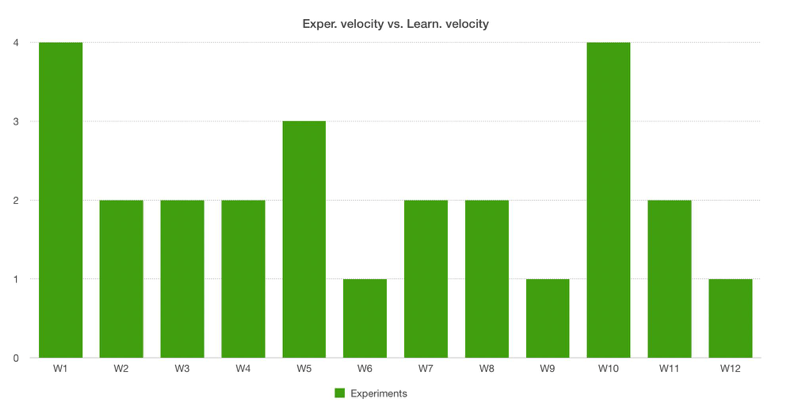

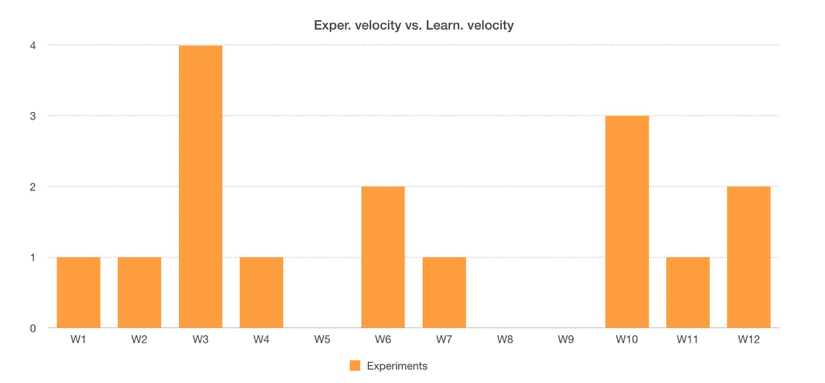

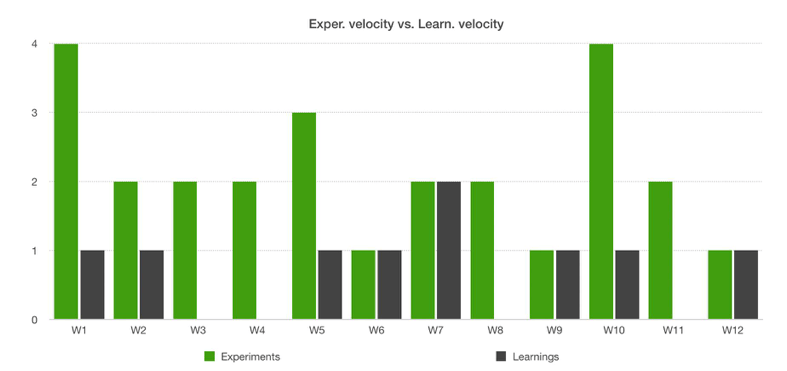

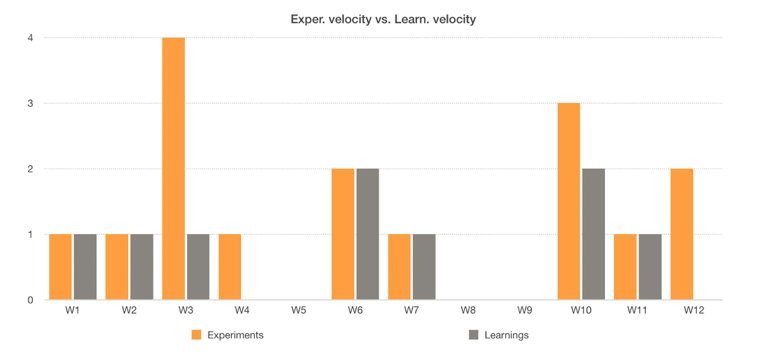

Look at the performance of these two teams:

It is obvious that Team Green did more experiments than Team Orange. The average ‘experiment velocity’ of Team Green was 2.1 experiments per week, while the average ‘experiment velocity’ of Team Orange was just 1.3. If one considered only ‘experiment velocity’ as a performance KPI, Team Green would undoubtedly be the winner.

But digging deeper, I reached a very different conclusion. Looking at how many of these experiments resulted in conclusive learnings, I saw how ‘experiment velocity’ can sometimes point you to the wrong conclusion.

As you can see, both teams reached the same average ‘learning velocity’, about 0.75 learnings per week.

As the goal of product teams is to get validated learning as fast as possible, ‘learning velocity’ should outshine ‘experiment velocity’, but not replace it.

Although it is true that experiments lead to learnings, it’s quite hard to get a conclusive learning out of every single experiment. There are many reasons for experiments not generating conclusive learnings, but the outcome is the same: wasted time.

By looking at a team’s ‘experiment/learning ratio’ one can tell a lot about the team and what type of help they need, if any.

Consider a lower-than-1 ‘experiment/learning ratio’. This team might be running multi-level (funnel-like) experiments, gathering learnings at each stage of the funnel. One thing to note in this case is that the team might be running multi-variable experiments which, if not done right, can lead to misleading insights.

Now consider a greater-than-1 ‘experiment/learning ratio’. This means that the team in question has performed more experiments than the number of learnings they got. This can suggest they are struggling to design impactful experiments, so coaching may be required. Or, at the very worst, they are trying to ‘game the system’ by having high ‘experiment velocity’ numbers.

An ‘experiment/learning ratio’ equal to 1 means a one-to-one match between the number of experiments a team has performed and the number of conclusive learnings they got. This would be the optimal case.
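To make the three cases concrete, here is a minimal sketch in Python that computes the ‘experiment/learning ratio’ from the two velocities and labels it. The velocities are the averages quoted above for Team Green and Team Orange; the function names and the `tolerance` used to decide what counts as "equal to 1" are my own assumptions for illustration, not part of any standard innovation accounting toolkit.

```python
def experiment_learning_ratio(experiment_velocity: float, learning_velocity: float) -> float:
    """Experiments per week divided by conclusive learnings per week."""
    if learning_velocity <= 0:
        raise ValueError("learning velocity must be positive to compute a ratio")
    return experiment_velocity / learning_velocity


def interpret(ratio: float, tolerance: float = 0.05) -> str:
    """Map a ratio onto the three cases discussed above.

    `tolerance` is an assumed cut-off for treating a ratio as 'equal to 1'.
    """
    if abs(ratio - 1.0) <= tolerance:
        return "about 1: each experiment yields a conclusive learning"
    if ratio < 1.0:
        return "below 1: more learnings than experiments (funnel-like experiments?)"
    return "above 1: more experiments than learnings (coaching needed, or gaming?)"


# Average weekly velocities from the article: (experiments, learnings).
teams = {"Green": (2.1, 0.75), "Orange": (1.3, 0.75)}

ratios = {
    name: experiment_learning_ratio(experiments, learnings)
    for name, (experiments, learnings) in teams.items()
}

for name, ratio in ratios.items():
    print(f"Team {name}: ratio {ratio:.2f} -> {interpret(ratio)}")
```

With these numbers both teams land above 1, but Team Green (roughly 2.8 experiments per learning) is much further from the optimal case than Team Orange (roughly 1.7), which is the opposite of what ‘experiment velocity’ alone suggests.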

It is important to point out that over time, as teams mature, their experiment velocity drops. This is due to the experiments becoming more and more complex and requiring more prep. Hence measuring the ratio is more relevant than measuring the two velocities independently of each other.

With ‘runway’ being ‘time left’ divided by ‘iteration length’, a team has only a limited number of iterations in which to learn, so ‘learning velocity’ trumps ‘experiment velocity’.
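A rough sketch of that runway argument, again in Python. The 12 weeks of ‘time left’ and the 2-week ‘iteration length’ are hypothetical numbers chosen only for illustration, and the projection assumes each team's velocities stay constant, which is a simplification.

```python
def runway_iterations(time_left_weeks: float, iteration_length_weeks: float) -> float:
    """Runway as defined above: time left divided by iteration length."""
    if iteration_length_weeks <= 0:
        raise ValueError("iteration length must be positive")
    return time_left_weeks / iteration_length_weeks


def projected_total(time_left_weeks: float, weekly_velocity: float) -> float:
    """Projected count by the end of the runway, assuming a constant weekly velocity."""
    return time_left_weeks * weekly_velocity


# Hypothetical runway inputs (not from the article).
TIME_LEFT_WEEKS = 12
ITERATION_LENGTH_WEEKS = 2

runway = runway_iterations(TIME_LEFT_WEEKS, ITERATION_LENGTH_WEEKS)
print(f"Runway: {runway:.0f} iterations")

# Average weekly velocities from the article.
velocities = {
    "Green": {"experiments": 2.1, "learnings": 0.75},
    "Orange": {"experiments": 1.3, "learnings": 0.75},
}

for name, v in velocities.items():
    experiments = projected_total(TIME_LEFT_WEEKS, v["experiments"])
    learnings = projected_total(TIME_LEFT_WEEKS, v["learnings"])
    print(f"Team {name}: ~{experiments:.1f} experiments, ~{learnings:.1f} learnings")
```

Under these assumptions both teams reach the end of the runway with the same number of learnings, even though Team Green will have run far more experiments along the way.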

At the end of the day, measuring innovation is not just about measuring velocities and ratios. These are tactics, and tactics are contextual while strategies are conceptual. The strategy for creating an innovation accounting system is to change the questions being asked. The questions need to be relevant to the maturity level of the product under scrutiny.

This article was initially published on The Future Shapers where I am a regular contributor.